State AI Legislation Focuses on Disclosure and Transparency Mandates

Key Takeaways

AI disclosure requirements in states have emerged as a practical first step in regulating artificial intelligence, requiring companies and creators to inform consumers when AI has been used to generate content or make decisions.

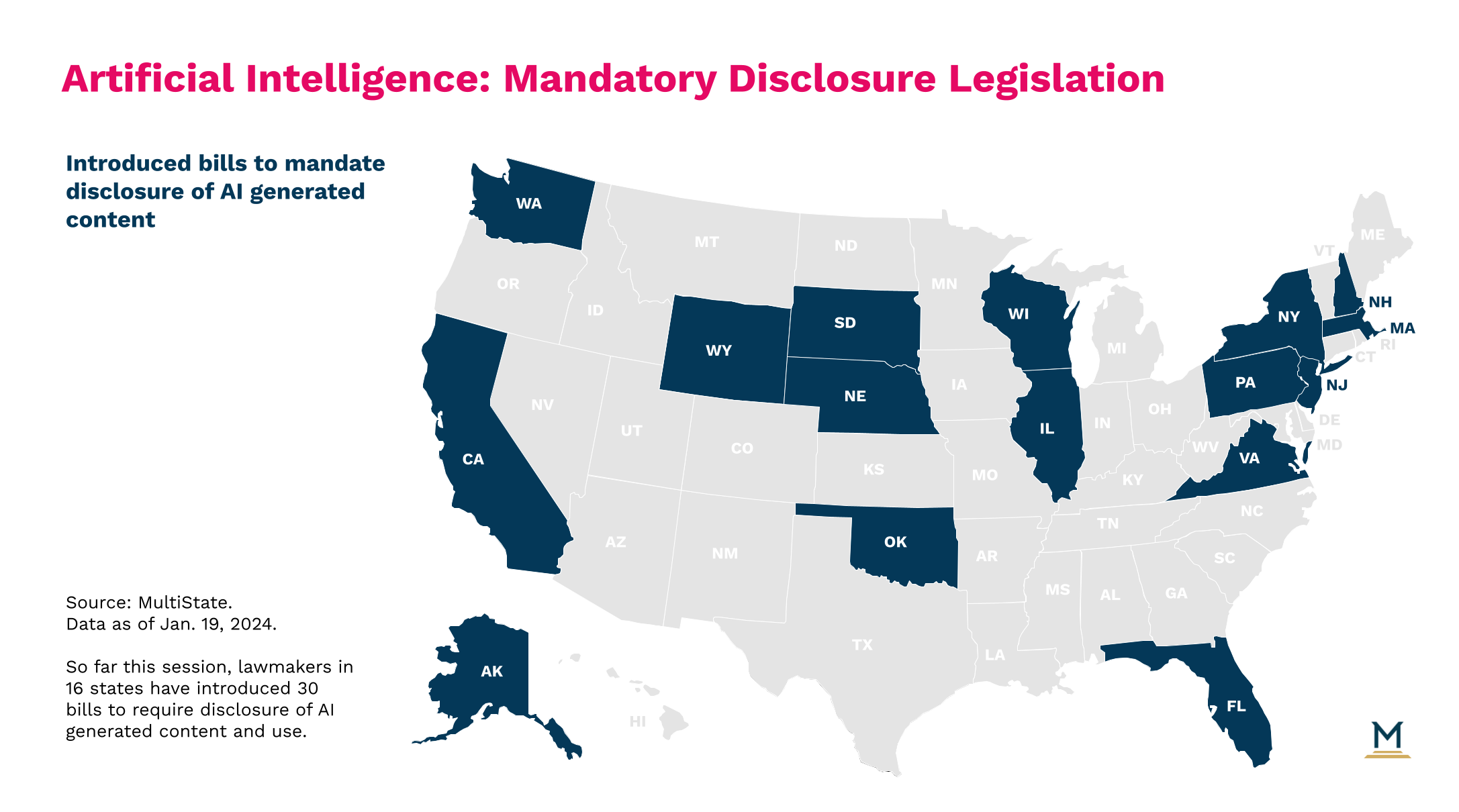

Washington and Michigan enacted laws requiring AI political advertising disclosure for manipulated media in campaigns, and similar bills have been introduced in Alaska, Florida, Nebraska, New Hampshire, Oklahoma, South Dakota, and Wisconsin this session.

State AI legislation on disclosure extends beyond elections, with California, Massachusetts, and Pennsylvania proposing requirements for AI-generated content across various contexts, while other states like Virginia and Illinois focus on content depicting actual persons or misleading material.

New York has introduced bills requiring disclosure specifically for AI-generated books and publications.

State AI transparency requirements also apply to AI decision-making tools, with California's proposed regulations and legislation requiring companies to notify consumers when AI is used to make consequential decisions affecting them.

The challenge with AI-generated content laws lies in defining when disclosure is triggered—for instance, determining whether using AI for brainstorming or minor edits constitutes "substantial" generation under laws like Michigan's.

If you're a subscriber, click here for the full edition of this update. Or, click here to learn more about our MultiState.ai+ subscription.

Policymakers face a tough challenge in regulating the fast-moving AI industry. Disclosure requirements, however, are one tool in the regulatory toolbox that they have embraced in the early stages of AI development. By mandating disclosure when AI has been used to generate media or to make a decision, policymakers thread the needle of providing useful information to consumers without placing a substantial roadblock in front of the nascent industry's development. Recent reports of low-quality, AI-generated books and articles flooding the market highlight the need for consumers to know which products were created by people and which by AI. Disclosure requirements are a logical first step as we all become accustomed to AI in our everyday lives.

AI Disclosure Requirements in Political Campaigns

The most straightforward case for mandatory disclosures on AI-generated content is in political campaigns, especially when a political candidate's image or voice is manipulated by AI and spread through social media. Voters already see disclosures in campaign ads under existing rules that require political advertisements to identify the group responsible for them. States have been very active in requiring similar disclosures for AI-generated political advertising. Last year, Washington enacted a bill (WA SB 5152) to require a disclosure when any manipulated audio or visual media is used in an electioneering communication. Michigan enacted a law (MI HB 5141) requiring a disclaimer ("This message was generated in whole or substantially by artificial intelligence") for pre-recorded phone messages and political advertisements that were created with AI within 60 days of an election. Already this session, we've seen similar bills introduced in Alaska (AK SB 177), Florida (FL HB 919, HB 1459, SB 850, & SB 1680), Nebraska (NE LB 1203), New Hampshire (NH HB 1500), Oklahoma (OK SB 1655), South Dakota (SD SB 96), and Wisconsin (WI AB 664 & SB 644).

Expanding AI Disclosure Laws Beyond Elections

While we haven't yet seen mandatory disclosure laws enacted outside of the political context, the concept can easily expand to other areas.

General AI Content Disclosure Proposals

Lawmakers in California (CA AB 1824), Massachusetts (MA HD 4788), and Pennsylvania (PA HB 1598) have introduced legislation to require the disclosure of content generated through AI in general. Other bills add a qualifier that narrows when AI-generated content must be disclosed. For example, a bill in Virginia (VA SB 164) requires disclosure as long as the AI-generated content "depicts an actual person," bills in Illinois (IL HB 3285) and Wyoming add the requirement that the AI-generated content must be misleading, and a New Jersey bill (NJ AB 1604) requires disclosure only when the AI-generated content is "intentionally deceptive."

Medium-Specific Disclosure Requirements

Additional disclosure bills are limited to a specific medium. A New York bill (NY AB 8098 & SB 7922) would require disclosure only for books created wholly or partially with the use of generative AI, and another New York bill (NY AB 8158 & SB 7847) would require disclosure for AI content in newspapers, magazines, and "other publications printed or electronically published" in New York. Finally, bills in Washington (WA SB 6073) and New York (NY AB 8110) would require disclosure when AI generation is used in legal proceedings.

AI Disclosure in Automated Decision Making

Beyond AI-generated media, disclosures are also a key aspect of proposed regulations on AI decision tools. In this context, a company using AI tools to make a decision affecting a consumer would be required not only to disclose the use of AI in that decision but also to proactively notify the consumer of that use. California's draft regulations propose such a mechanism, as does major legislation (CA AB 331) introduced by Assemblymember Rebecca Bauer-Kahan (D), which would require notification of any person subject to a "consequential" decision by an AI tool.

Implementation Challenges and Future Concerns

The difficulty lies in deciding where to draw the line on what triggers a disclosure requirement. For example, Michigan's AI in elections law requires a disclaimer when media "is generated in whole or substantially" by AI, but that "substantially" qualifier will require some interpretation. If a writer uses AI to brainstorm ideas for a newsletter or asks AI to generate the article's title, would that trigger a disclosure? What if an artist incorporates an AI-generated image into their own artwork and then modifies it further? If a movie studio uses AI tools to edit a film shot without the help of AI, would that require disclosure?

Regardless of the answers to the above questions, we could see diminishing benefits of disclosure in the long run if AI is incorporated into more of our daily tasks. We could eventually become numb to "generated with AI" disclosures as they become ubiquitous, in the same way that most people ignore the "known to cause cancer" warnings on everyday products mandated by California's Prop 65.

But for now, state lawmakers have embraced disclosure as a way to promote transparency as AI technology works its way into our everyday lives. Expect lawmakers to use these types of disclosure and notification requirements as they expand their regulatory scope over AI technologies.
