AI Chatbot Regulation for Minors Gains Bipartisan Momentum in 2026

Key Takeaways

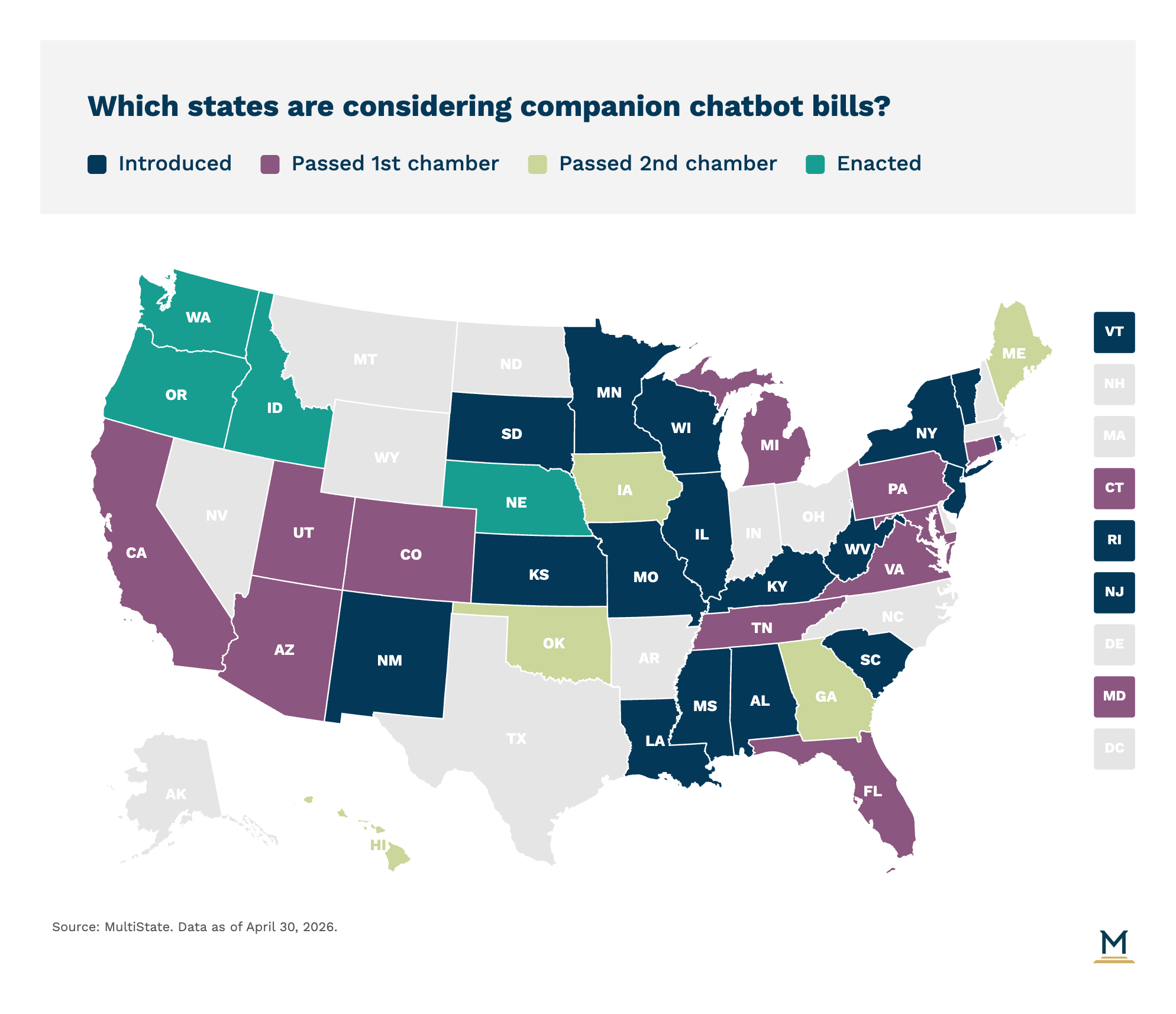

- State companion chatbot laws are moving forward in both red and blue states this year, with Idaho, Nebraska, Oregon, and Washington enacting regulations while several other states have bills awaiting governor signatures.

- Most 2026 companion chatbot legislation requires platforms to disclose that users are interacting with AI rather than a human, with blue states like New York and Washington requiring reminders every three hours during extended conversations.

- AI chatbot regulation for minors includes restrictions on sexual content, requirements to prevent chatbots from simulating romantic relationships with children, and mandates for crisis intervention when users express thoughts of self-harm.

- State AI child safety laws have received explicit support from the Trump Administration, which asked Congress not to preempt state regulations protecting children from harmful chatbot interactions.

- The Senate Judiciary Committee unanimously advanced the federal GUARD Act, which could establish national standards for companion chatbots and potentially preempt the current wave of state-level regulation.


High-profile incidents in the news have pushed companion chatbots into the policy spotlight, making them one of the most active areas of state tech policy this year. While federal preemption efforts have chilled other state efforts to regulate artificial intelligence, the Trump Administration explicitly asked Congress not to preempt state “child safety protection” laws in its executive order calling for a national framework on AI regulation. This has paved the way for both red and blue states to enact regulations on how companion chatbots interact with users, particularly children.

State lawmakers around the country have held hearings featuring accounts of users who came to rely on chatbot systems as companions, in some cases leading to troubling interactions ranging from self-harm discussions to inappropriate or boundary-crossing exchanges involving minors.
